Glossary

A comprehensive glossary of digital marketing terms and definitions to help you navigate the industry.

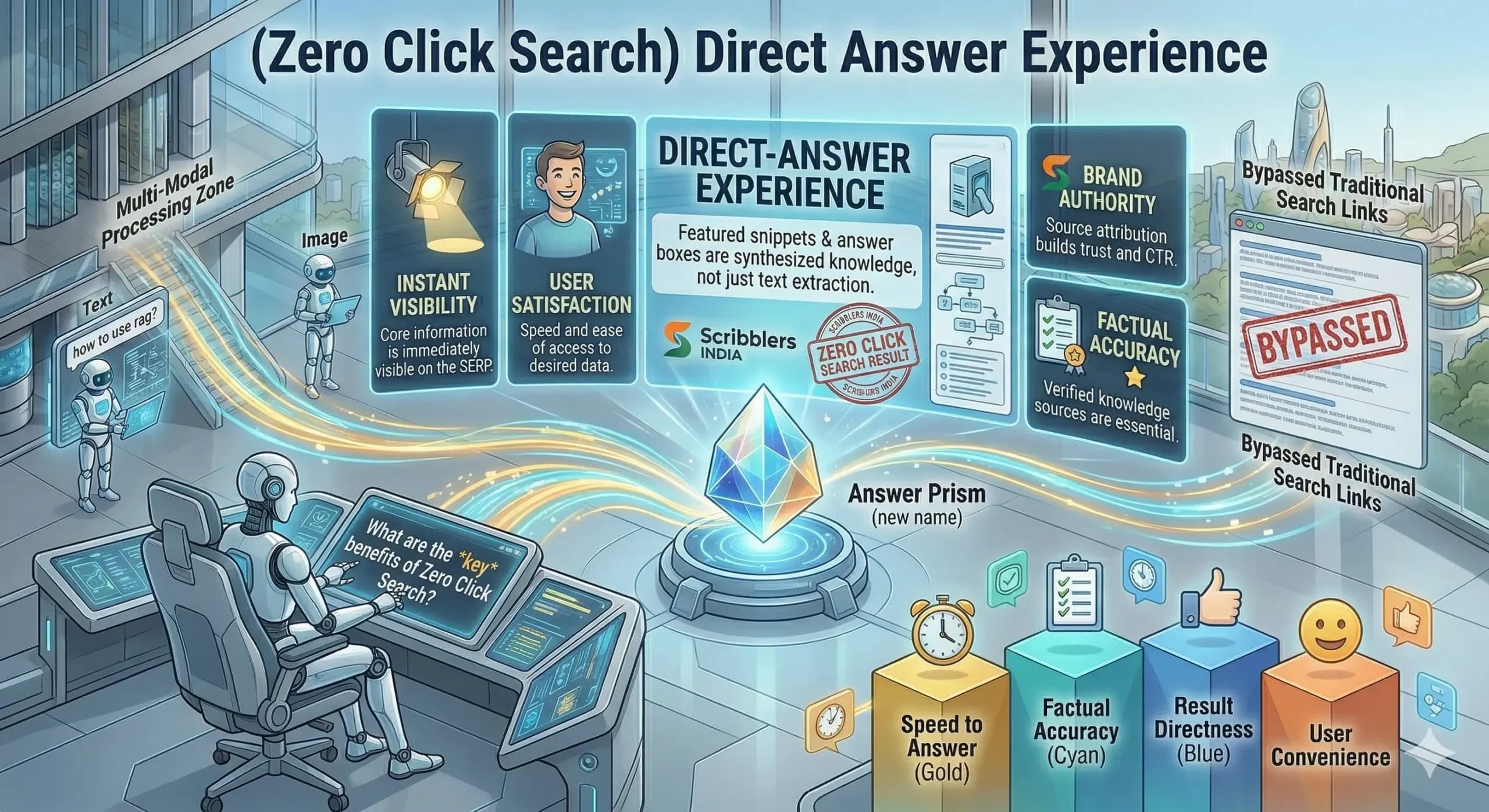

Zero-Click Search

Search engines used to function as directories, pointing users to websites that held the answer. Today, they increasingly function as answer machines. A user types a question, the search engine delivers the answer directly on the results page, and the user leaves without visiting a single website. This behavior defines zero-click search, and it is reshaping how brands measure visibility, plan content, and approach digital marketing altogether.

According to research, around 60% of all Google searches in 2025 end without a click. On mobile devices, that figure climbs to 77%. For brands that depend on organic search as a primary traffic channel, this represents one of the most significant structural shifts in the history of digital marketing.

What Is a Zero-Click Search and What Causes It?

A zero-click search occurs when a user finds the information they need directly on the search results page, without clicking through to any external website. The search engine resolves the query within its own interface, so the user has no need to visit a website.

Several search features drive this behavior. Google’s AI Overviews synthesize answers from multiple sources and display them at the top of results. Featured snippets present a highlighted block of text extracted directly from a web page. Knowledge panels surface structured information about entities (brands, people, and locations) without requiring the user to visit any individual source website. Local packs, weather cards, calculator tools, and conversion widgets resolve informational and transactional queries entirely within the search environment.

Voice search accelerates this trend significantly. When a user asks a smart speaker or mobile assistant a question, the device reads a single answer aloud, with no link provided, making the concept of a click entirely irrelevant to how the content is discovered and consumed.
How Does Zero-Click Search Affect Organic Traffic and Content Marketing?

Zero-click search creates a direct tension between traditional content marketing goals and the reality of how modern search now works. Brands that built their traffic models on organic clicks from informational content report measurable declines even when their search rankings remain strong.

The impact is not uniform across all content types. Informational content (definitions, how-to guides, conversion tools, and factual queries) faces the heaviest disruption because these query types are precisely what AI Overviews and featured snippets are designed to resolve. Transactional, commercial, and navigational queries that require a website visit to complete an action are far less disrupted, which is why a diversified content strategy that covers multiple intent types performs more resiliently in a zero-click environment.

Impression share grows while click share shrinks: A brand’s content can appear at the top of a search results page and earn significantly fewer clicks than it would have two years ago, because the AI Overview or featured snippet above it already answered the user’s question before they considered clicking.

Brand awareness benefits remain substantial: When a brand’s content is cited in an AI Overview or featured snippet, it gains exposure at the exact moment the user is asking a relevant question. This awareness-level visibility influences brand recall, direct search behavior, and downstream conversions even when no click occurs during the session.

Content authority becomes the primary competitive advantage: Zero-click search rewards brands whose content is trusted enough to be selected as the source for a displayed answer. Building that trust through thought leadership content writing and original research delivers compounding brand authority that benefits both zero-click visibility and traditional organic performance simultaneously.
What Is the Difference Between Zero-Click Search and AI-Powered Search?

Zero-click search is a behavioral outcome: the user gets the answer without clicking. AI-powered search is the mechanism increasingly responsible for producing that outcome, through features like AI Overviews, synthesized Perplexity responses, and ChatGPT direct answers. The two terms are related but not interchangeable in a content strategy context.

Scope of the concept: Zero-click search predates AI-powered search by several years. Featured snippets, knowledge panels, local packs, and weather widgets all produced zero-click outcomes long before AI Overviews launched. AI-powered search has dramatically accelerated the zero-click rate, but the trend itself began well before generative AI entered the search experience.

Source attribution patterns: Traditional zero-click features, such as featured snippets, typically attribute the answer to a single source and display the URL clearly below the extracted text. AI-powered search responses may synthesize content from multiple sources simultaneously, citing several or none, making attribution more complex for brands trying to accurately measure their zero-click visibility.

Query complexity coverage: Traditional zero-click features resolved simple, factual queries most effectively. AI-powered search extends zero-click behavior into more complex, multi-part, and conversational queries that previously required users to visit multiple websites to fully satisfy their information needs.

Optimization approach required: Featured snippets and knowledge panels respond to structured data, concise answer paragraphs, and schema markup. AI-powered search also responds to entity authority, original information gain, and multi-platform brand credibility, which is why AEO and GEO strategies extend the optimization framework well beyond traditional zero-click tactics.
Measurement framework differences: Zero-click search performance has traditionally been measured through featured snippet wins and impression share in Google Search Console. AI-powered zero-click visibility additionally requires tracking brand mentions in AI-generated responses, citation frequency across platforms, share of voice in AI-mediated discovery, and downstream branded search volume lift as indirect indicators.

How Can Brands Adapt Their Content Strategy to Zero-Click Search?

Adapting to zero-click search does not mean abandoning organic content investment. It means restructuring how content gets created, measured, and distributed to capture visibility at the answer layer rather than relying solely on click-through traffic as the primary measure of success.

The most effective brands in a zero-click environment take a visibility-first approach. They optimize for citation, brand mentions, and authority signals rather than for clicks. A strong content marketing strategy for the zero-click era integrates traditional SEO with AEO and GEO principles, ensuring the brand performs across all layers of the modern search experience.

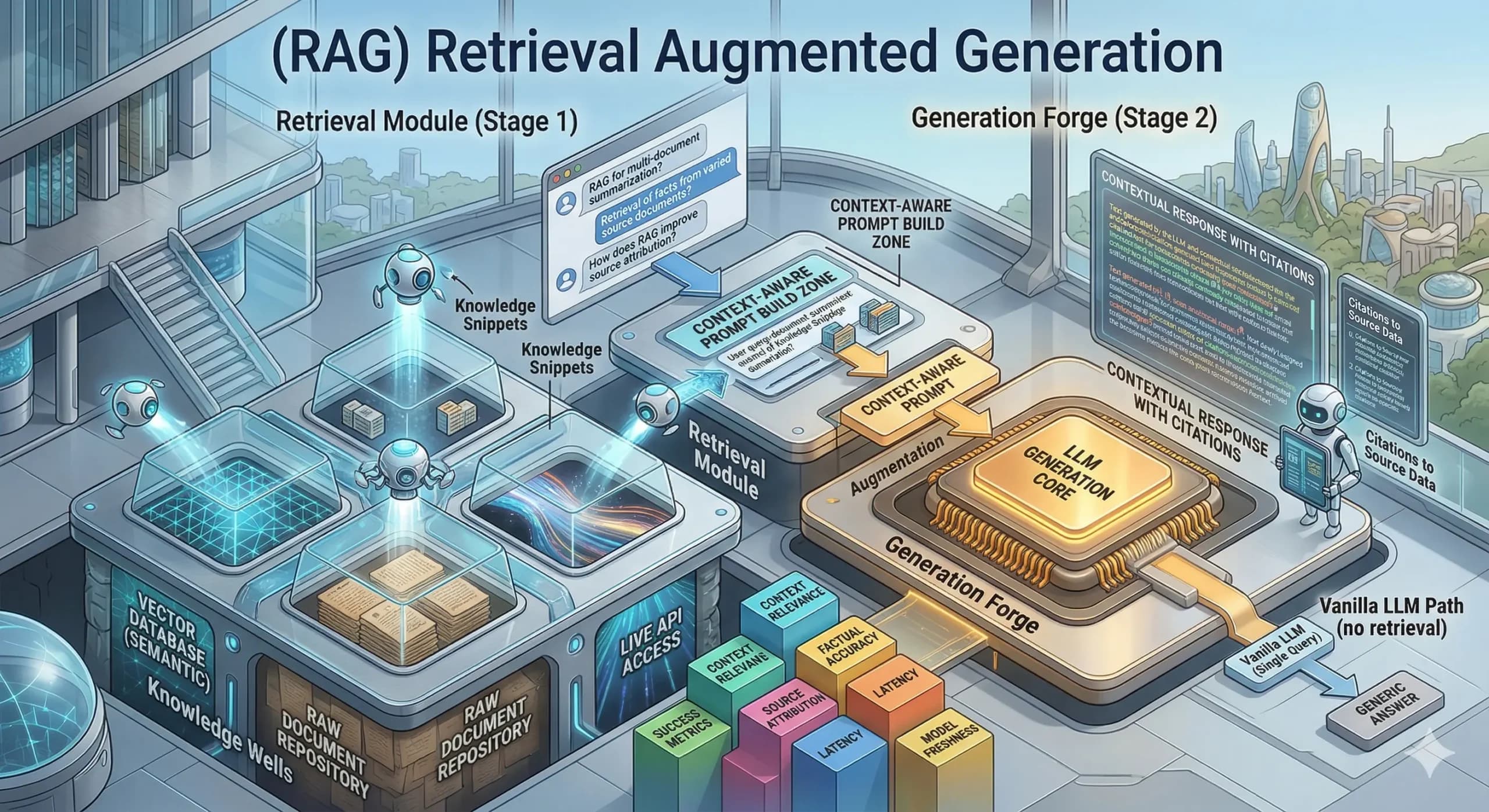

Retrieval-Augmented Generation (RAG)

AI platforms carry a fundamental limitation. They can only respond based on what they absorbed during training. That training data has a fixed cutoff date, which creates a real problem for brands and businesses alike. They need AI systems to deliver accurate, current, and domain-specific answers.

Retrieval-Augmented Generation (RAG) solves this problem directly. It connects a large language model to up-to-date external knowledge sources before generating a response. This connection dramatically improves the accuracy and trustworthiness of the AI’s output. For content marketers and digital strategists, understanding RAG is now essential. It determines how AI search platforms decide which sources to cite when answering user queries.

What Is Retrieval-Augmented Generation and How Does It Work?

Retrieval-Augmented Generation (RAG) is an AI framework. It enhances large language models by connecting them to external knowledge bases before generating a response. Rather than relying only on training data, a RAG system retrieves relevant documents in real time. It then uses that retrieved content to ground the answer it produces for the user.

The process follows a clear sequence. A user submits a query. The RAG system converts it into a vector, i.e., a numerical representation the system searches with. The system then scans a knowledge base for documents semantically similar to the query. It selects the most relevant sources and feeds them into the language model alongside the original question.

The language model then synthesizes a response. It draws from its training knowledge and the retrieved documents simultaneously. It often cites the external sources that informed its answer. This retrieve-then-generate workflow powers AI search platforms like Perplexity and Google AI Overviews. Well-structured, authoritative content earns citations more consistently than generic or outdated material.

Why Does RAG Matter for Content Marketing and Brand Visibility?

RAG directly determines which content an AI platform retrieves and cites. It forms the core mechanism behind Answer Engine Optimization and GEO strategies that brands invest in today.

When a RAG-powered platform generates a response, it evaluates candidate documents for relevance, authority, recency, and structural clarity. Content that scores well across these dimensions earns a citation in the AI output. Content that is poorly structured or outdated gets excluded from the response pool entirely. This exclusion happens regardless of how well it ranks in traditional search results.

Content structure becomes a retrieval signal: RAG systems favor content organized for extraction. They prioritize clear headings, concise answer paragraphs, and direct statements the system can lift and synthesize without losing meaning. A content strategy built around RAG-friendly formatting consistently improves AI citation rates across major platforms.

Original information gives the retriever a specific reason to select content: RAG systems have no reason to cite a source that restates what is already available elsewhere. Original research and proprietary data give the retrieval component a specific reason to select a brand’s content over a competitor’s during the scoring phase.

Content recency directly improves retrievability: RAG systems actively favor fresh content. Their purpose is to ground AI responses in accurate, current information. Regular content updates directly improve a brand’s position in the retrieval pool of RAG-powered platforms.

E-E-A-T signals strengthen the probability of citation: RAG systems retrieve from demonstrably credible sources. Author credentials, cited sources, and third-party brand mentions all increase the likelihood that a brand’s content is selected during the retrieval scoring phase.

What Are the Four Key Components of a RAG System?

A RAG system operates through four interconnected components.
Together, they determine the quality, accuracy, and relevance of the generated output for any given user query.

The knowledge base: The external repository that the RAG system queries when a user submits a prompt. It can include internal documents, product databases, web-indexed content, and research papers. The quality and organization of this knowledge base directly determine how accurately the system retrieves relevant content.

The retriever: This component converts the user query into a vector. It then searches the knowledge base for semantically similar content. It evaluates relevance mathematically and selects the most contextually appropriate documents to pass to the language model. Stronger retrieval quality leads to more accurate final responses for the user.

The integration layer: This component coordinates the overall RAG pipeline. It combines retrieved documents with the original user query through prompt engineering techniques. It instructs the language model to synthesize retrieved information into a coherent response that faithfully represents the source material.

The generator: This is the large language model that produces the final response. It simultaneously draws on retrieved documents and its own training knowledge. Models such as GPT-4, Claude, Gemini, and Llama commonly serve as generators. They combine external evidence with broad language understanding to produce accurate, citation-supported outputs.

What Are the Benefits and Challenges of Retrieval-Augmented Generation?

RAG transforms what large language models can accomplish. It carries both significant advantages and practical challenges that organizations must navigate thoughtfully to achieve reliable results.

Benefits of RAG

Reduced AI Hallucinations: RAG decreases instances of false information by grounding every response in verifiable, retrieved documents. This approach improves factual accuracy for high-stakes queries in the finance and healthcare industries.
Dynamic Knowledge Updates: Organizations can keep their AI systems current without the high cost of retraining a model from scratch. The knowledge base updates independently whenever new information becomes available in the data source.

Improved Source Transparency: RAG provides users with specific citations within each generated response to increase overall trust. These citations allow audiences to verify information directly, especially in regulated and high-credibility industries.

Cost-Effective Specialization: This technology enables targeted applications by connecting a general-purpose model to a specialized knowledge base. A single model serves multiple industry contexts without requiring separate, expensive training runs.

Challenges of RAG

Risk of Contextual Misinterpretation: Systems occasionally retrieve factually correct documents that are contextually misleading for the specific query. The language model may then produce a response that combines accurate data with an incorrect conclusion.

Dependence on Data Quality: The quality of the final output depends heavily on the organization and structure of the knowledge base. Poorly structured
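
The retrieve-then-generate sequence described in this entry (query to vector, similarity search over a knowledge base, prompt assembly for the generator) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular platform works: the three-document `KNOWLEDGE_BASE`, the word-count `embed` function, and the prompt wording are all hypothetical stand-ins, and the generator step is left out because it would require a real language model.

```python
import math
import re
from collections import Counter

# Hypothetical toy knowledge base; a real system would index many documents.
KNOWLEDGE_BASE = [
    "RAG connects a language model to external knowledge sources before it answers.",
    "Featured snippets present a highlighted block of text extracted from a web page.",
    "Schema markup helps answer engines parse the meaning of page content.",
]


def embed(text):
    """Toy 'vector': word counts. Real retrievers use learned dense embeddings."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Retriever: rank documents by similarity to the query and keep the top k."""
    query_vector = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vector, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, sources):
    """Integration layer: combine the retrieved sources with the original question."""
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return (
        "Answer using only the numbered sources and cite them.\n"
        f"Sources:\n{numbered}\n"
        f"Question: {query}"
    )


query = "How does RAG use external knowledge?"
sources = retrieve(query, KNOWLEDGE_BASE)
prompt = build_prompt(query, sources)
print(prompt)  # this prompt would then be passed to the generator (an LLM)
```

In this toy version, the document that shares the most words with the query ranks first. Production retrievers replace the word counts with dense embeddings so that documents can match on meaning rather than on shared vocabulary.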

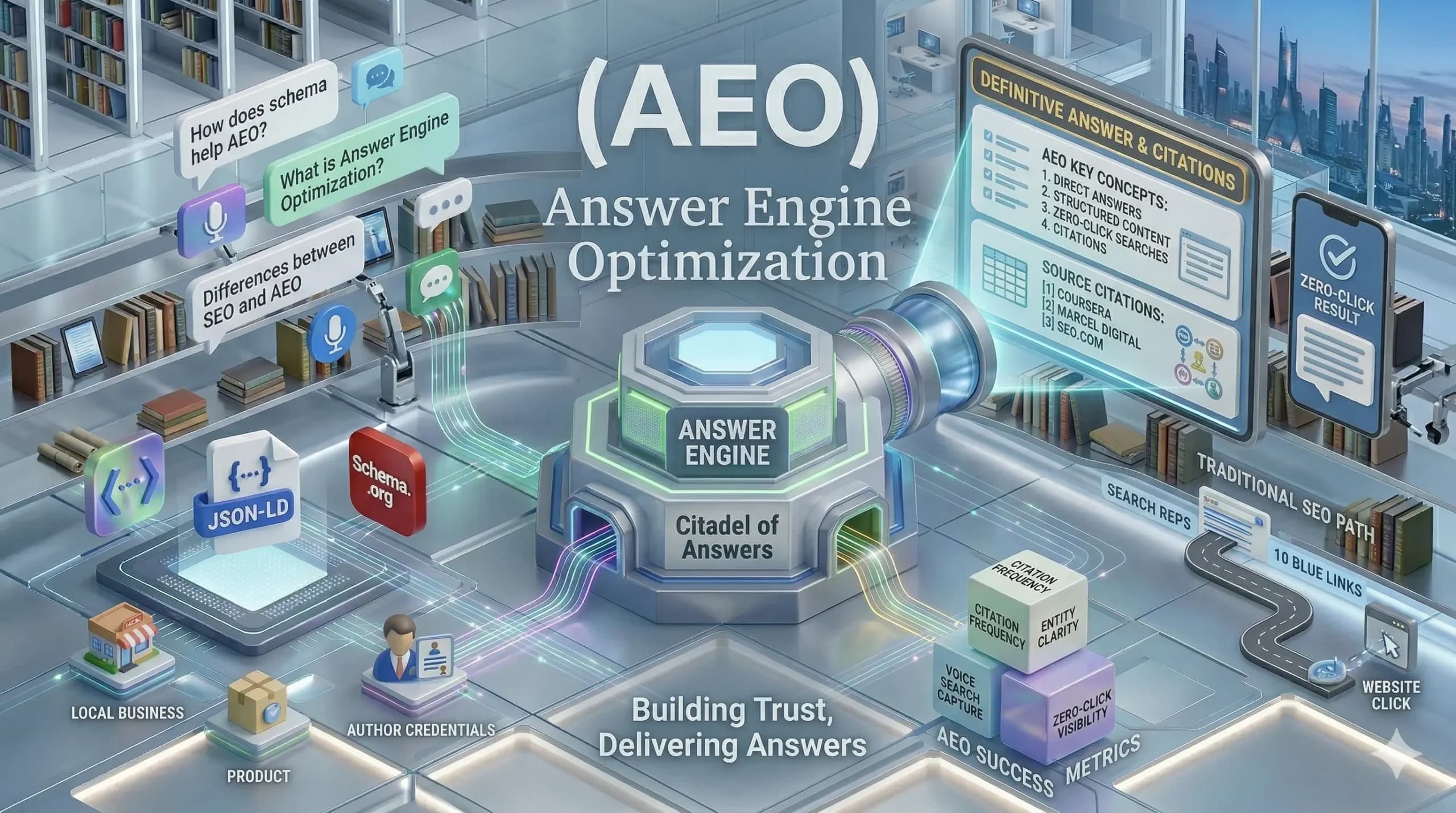

Answer Engine Optimization (AEO)

Search behavior has changed in ways that traditional SEO alone cannot address. Over 60% of Google searches now end without a single click, and platforms like ChatGPT serve more than 800 million users every week. Brands that want to stay visible in this environment need a sharper strategy.

Answer Engine Optimization (AEO) is that strategy. It focuses on structuring content so that AI-powered platforms deliver it as a direct answer to user queries, rather than as a link in a results list. For content marketers and digital brands, AEO has become a measurable, high-priority discipline that determines where and how a brand gets discovered.

What Is AEO and Why Does It Matter Today?

Answer Engine Optimization (AEO) is the practice of structuring content so that AI-driven platforms can extract and surface it as a direct, cited answer to a user query. Platforms like Google AI Overviews, ChatGPT, Perplexity, and voice assistants all operate as answer engines.

Unlike traditional SEO, which targets ranking positions and website clicks, AEO targets the answer itself. The goal is for a brand’s content to become the source that an AI platform cites, summarizes, or reads aloud when a user asks a relevant question.

This shift matters because users today expect instant, trustworthy answers. Voice assistants, AI chatbots, and AI Overviews deliver exactly that, which means brands that do not optimize for answers risk becoming invisible even when their content holds a strong traditional search ranking.

How Does Answer Engine Optimization (AEO) Differ from Traditional SEO?

AEO and SEO share the same foundation, yet they target different outcomes, measurement frameworks, and content formats in meaningful ways.

AEO prioritizes being the source of an answer over earning a click. Traditional SEO measures success through rankings, traffic, and click-through rates.
AEO measures success through citations in AI responses, brand mentions in answer engines, and the share of voice a brand holds across AI-powered platforms.

Target platform: Traditional SEO targets Google’s ranked link results. AEO targets AI-generated answer surfaces, including AI Overviews, Perplexity responses, voice search outputs, and featured snippets where answers appear above organic results.

Content format requirements: Traditional SEO rewards comprehensive, keyword-rich pages. AEO rewards concise, question-forward content that leads with a direct answer in the first 40 to 60 words. This makes it easy for AI systems to extract, synthesize, and deliver to the user.

Intent alignment: Traditional SEO ranks pages for broad keyword clusters. AEO demands content that aligns closely with the specific conversational question a user types or speaks. This requires a deeper understanding of natural-language search intent across every topic area.

Authority signal weight: AEO places greater emphasis on E-E-A-T signals: experience, expertise, authoritativeness, and trustworthiness. This is because answer engines actively evaluate whether a source is credible enough to be cited in a response that reaches millions of users at once.

What Are the Core Components That Drive AEO Success?

AEO builds on a set of interconnected content, technical, and authority signals that, together, tell answer engines that a brand is worth citing in their responses.

The question-forward content structure is the most fundamental component. Organizing content around the exact questions an audience asks and using those questions as headings allows AI systems to locate and extract answers efficiently. Direct, answer-first writing in the opening sentences of each section signals that the content exists to inform rather than to sell.

Structured data and schema markup: These allow answer engines to parse content meaning with precision.
FAQPage, HowTo, Article, and Organization schema types signal the nature of content to AI crawlers, improving the likelihood of inclusion in rich results and AI-generated responses across all major platforms.

Concise, extractable paragraphs: Paragraphs in the 40 to 60 word range match the format that AI Overviews and featured snippets consistently pull from. Longer, unbroken text blocks are harder for AI systems to summarize and attribute accurately to the correct source.

Multi-platform brand presence: Answer engines draw from review platforms, social content, third-party publications, and discussion forums alongside a brand’s own website, which means consistency of brand representation across all surfaces matters significantly for AEO performance.

Why Do E-E-A-T Signals Matter for AEO?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. These four signals determine whether an answer engine considers a source credible enough to cite in a direct response to a user query.

Answer engines do not rank blue links. They recommend sources to users who trust those recommendations completely. For an AI platform to cite a brand’s content, it needs clear evidence that the content comes from a genuinely knowledgeable source with a documented track record.

Experience: Content that demonstrates first-hand knowledge through case studies, real outcomes, and practitioner insights signals authenticity that AI systems recognize as more reliable than purely theoretical coverage of a subject.

Expertise: Clear author profiles, bylines linked to credible sources, and content that demonstrates depth rather than breadth show answer engines that the content comes from someone with genuine authority in the specific subject area being covered.
Authoritativeness and Trustworthiness: Third-party mentions, backlinks from reputable sources, accurate statistics, and consistent publishing history build the entity authority that AI platforms use to assess whether a brand deserves a citation in a generated response delivered to users.

What Are the Key AEO Strategies for Digital Marketers?

Effective AEO requires a deliberate shift in how content is planned, structured, and distributed across channels.

Brands that lead with answers consistently perform better in AI-generated answer surfaces than those that bury the response in long introductions. Placing a direct, complete response to the query in the first paragraph of each content section aligns with how AI platforms retrieve and display information. This approach also signals to the platform that the content immediately resolves the user’s question, rather than requiring them to scroll through multiple paragraphs.

Build content hubs around specific questions: Organize service pages, blog posts, and glossary content around the precise natural-language questions an audience asks. Tools like Google’s People Also Ask boxes, search autocomplete, and branded search
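
The FAQPage schema type mentioned earlier in this entry is usually published as a JSON-LD block embedded in the page. As a rough sketch of what that markup looks like, the Python below builds one from question-and-answer pairs. The `faq_jsonld` helper and the sample pair are hypothetical and only illustrate the shape of the data; the property names (`mainEntity`, `acceptedAnswer`, and so on) follow the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Illustrative content taken from this glossary entry.
pairs = [
    (
        "What is Answer Engine Optimization?",
        "Answer Engine Optimization (AEO) is the practice of structuring content "
        "so that AI-driven platforms can extract and surface it as a direct, "
        "cited answer to a user query.",
    )
]

markup = faq_jsonld(pairs)
# The serialized JSON would sit inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```

Keeping the answer text in the markup identical to the visible answer on the page avoids giving crawlers two conflicting versions of the same statement.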

Generative Engine Optimization (GEO)

Search has changed fundamentally. Millions of users today turn to AI-powered platforms like ChatGPT, Perplexity, and Google AI Overviews to get direct answers rather than scrolling through a list of links. Brands that want to stay visible in this environment need a sharper strategy.

Generative Engine Optimization (GEO) is exactly that strategy. It focuses on structuring content so that AI platforms can retrieve, understand, and cite it when synthesizing answers for users. For digital marketers and content creators, GEO has become a core pillar of any serious, future-ready visibility strategy.

What Is Generative Engine Optimization and How Does It Use RAG?

Generative Engine Optimization (GEO) is the practice of creating and structuring content so that AI-driven platforms can surface and cite it within their generated responses. The goal is not a ranking position but inclusion in the AI’s authored answer.

Most AI search platforms rely on a process called Retrieval-Augmented Generation, or RAG. The system first retrieves relevant documents from an index or the live web, then passes those documents to a Large Language Model (LLM) to generate a synthesized, coherent response for the user.

Content that is authoritative, clearly structured, and information-rich scores higher during that retrieval stage. This means a brand does not need to hold the top organic ranking; it needs to be credible and useful enough for an AI system to select it as a trusted reference source.

Why Is GEO Important for Your Digital Presence?

AI search platforms are permanently reshaping how audiences discover brands, and businesses that do not adapt stand to lose meaningful visibility across the channels that matter most.

It creates reach beyond traditional search results: AI platforms like ChatGPT now serve hundreds of millions of users every week.
A brand that gets cited in AI-generated responses gains exposure to audiences who may never interact with a conventional search results page, opening entirely new discovery channels.

It attracts high-intent, conversion-ready audiences: Visitors who arrive through AI referrals tend to convert at significantly higher rates than standard organic traffic. These users have already received a recommendation from a trusted AI system, which means they arrive with a much stronger intent to engage or purchase.

It strengthens brand authority across platforms: When AI systems consistently cite a brand as a reliable source, that pattern compounds over time. It reinforces the brand’s authority with audiences across multiple platforms and positions it as a recognized expert in its category.

It future-proofs content marketing investments: As AI-generated summaries replace traditional search results for a growing share of queries, brands with a strong GEO foundation will maintain their visibility. Brands that delay this transition risk watching their organic reach erode with limited options to recover it quickly.

What Are the Key Components of Generative Engine Optimization (GEO)?

GEO is a system of interconnected signals that, together, tell AI platforms whether a brand is worth citing. Here are the key components of Generative Engine Optimization:

Content authority and information gain: AI platforms prioritize sources that offer original, verifiable insights. Proprietary data, expert perspectives, cited statistics, and first-hand analysis give an AI system a specific, citable reason to reference a particular source over a competitor that publishes only generic information.

Semantic clarity and logical structure: Content must be written in direct, natural language with well-organized formatting. Clear headings, concise paragraphs, and specific answers enable AI systems to accurately extract and reassemble information during synthesis without distortion.
Entity and sentiment accuracy: AI platforms build associations between brands, products, and attributes based on how content is written across the web. Ensuring that a brand’s content reinforces accurate, positive attributes helps AI systems characterize the brand correctly in generated responses.

Technical accessibility for AI crawlers: GEO cannot function if AI systems cannot access a website’s content. Clean site architecture, proper robots.txt configuration, schema markup, and fast page load times all contribute to a site’s retrievability by AI-powered crawlers and indexing systems.

Multi-platform brand presence: AI models draw from a wide range of sources: websites, review platforms, forums, social media, and third-party publications. A consistent, authoritative brand presence across all of these channels strengthens the overall signal that an AI system uses to evaluate credibility.

How Does Generative Engine Optimization (GEO) Work in Digital Marketing?

Generative Engine Optimization follows a retrieve-then-synthesize workflow that is fundamentally different from that of traditional search engines. Understanding this process is what separates a well-executed GEO strategy from one that simply borrows SEO tactics and relabels them.

When a user poses a question to an AI platform, the system scans its index or the live web for the most semantically relevant documents. This is not keyword matching; it is concept matching. A piece of content about content strategy for SaaS brands may surface in a response about B2B digital marketing even if that exact phrase does not appear in the article. Relevance is determined by meaning, not by a specific string of words.

Once the AI retrieves its candidate sources, it evaluates each one for authority, recency, factual accuracy, and structural quality. Sources that are clear, well-cited, and information-dense score higher in this evaluation.
This is the stage where optimized content earns its advantage: it gets selected, while generic, thin, or poorly structured content is excluded from the synthesis pool entirely.

In the final stage, the AI generates a unified response and attributes portions of it to specific sources via citations or footnotes. Brands whose content is structured for extraction, with strong opening statements, clear entity definitions, and original data points, are more likely to receive an explicit citation in that final response, which is the primary visibility goal of an effective GEO strategy.

What Are the Benefits and Challenges of GEO in Content Marketing?

GEO presents a significant opportunity for brands willing to invest in it, though the path forward comes with real challenges that require careful navigation. Here are the key benefits of GEO in content marketing:

Benefits

Brands cited in AI-generated responses gain visibility in a discovery channel that now
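
On the technical accessibility point raised earlier in this entry, one quick way to check whether a robots.txt file would block an AI crawler is Python’s standard `urllib.robotparser` module. The sketch below is illustrative only: the robots.txt content, the `example.com` URLs, and the paths are made up, and `GPTBot` and `PerplexityBot` are used here simply as examples of crawler user-agent names.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse rules from text instead of fetching a URL

# Check a few made-up paths for each example crawler name.
for agent in ("GPTBot", "PerplexityBot"):
    for path in ("/glossary/geo", "/private/report", "/admin/login"):
        url = "https://example.com" + path
        allowed = parser.can_fetch(agent, url)
        print(f"{agent:14} {path:18} allowed={allowed}")
```

A crawler with its own `User-agent` group follows that group’s rules, while any crawler without one falls back to the `*` group, so a single overly broad `Disallow` line can hide content from every AI crawler at once.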

Large Language Model (LLM)

Large Language Models (LLMs) power the AI tools that millions of users now rely on every day. These tools range from AI search platforms and writing assistants to customer support systems and content strategy tools. Understanding how these models work is no longer limited to data scientists and developers. Marketers, content creators, and brand builders must now learn what Large Language Models are and how they shape digital experiences, because this knowledge is essential for staying relevant and competitive in today’s AI-driven landscape.

What Is a Large Language Model (LLM) and What Can It Do?

A Large Language Model (LLM) is a type of AI that is trained on massive volumes of text data. This data is drawn from books, websites, articles, and other sources, enabling the model to understand and generate human language at scale. These models learn by recognizing patterns, context, and relationships between words, drawing from billions of examples.

LLMs are capable of much more than simple keyword matching. They understand the meaning behind language, which allows them to summarize documents, answer nuanced questions, generate original content, translate languages, and assist with tasks that previously required significant human effort.

The most well-known examples include OpenAI’s GPT-4, Google’s Gemini, and Anthropic’s Claude. Each of these models contains billions of parameters that function as the model’s accumulated knowledge and reasoning capabilities. This enables the model to generate responses that feel natural and contextually appropriate.

Why Is Pre-Training LLMs So Important?

Pre-training is the foundational stage where an LLM builds its core understanding of language, facts, and reasoning. This occurs before the model is customized for any specific task or industry.

Establishes the model’s knowledge base: During pre-training, the LLM is exposed to trillions of words from diverse sources.
This exposure allows the model to absorb grammar, factual information, linguistic patterns, and contextual reasoning, which inform every response it generates afterward.

Determines the model’s strengths and limitations: The quality, diversity, and volume of pre-training data directly shape what a model can do well and where it may fall short. A model trained on narrow or low-quality data will produce limited, unreliable outputs, regardless of how much fine-tuning follows later.

Makes fine-tuning faster and more effective: Pre-training provides the model with a broad language foundation. Specialized fine-tuning can then build on this foundation. Organizations that fine-tune a pre-trained model on industry-specific content can achieve high accuracy with much less data than would be required to train from scratch.

Shapes how AI tools serve content and marketing teams: LLMs that power AI search and content platforms are built through pre-training processes. This defines their ability to understand intent, generate relevant responses, and cite authoritative sources. This is why content quality and structure are crucial to how these models represent a brand.

What Are the Key Types of Large Language Models (LLMs)?

LLMs vary significantly in their architecture, accessibility, and intended purpose. Understanding these differences helps marketers and content teams select the right tools for their goals.

General-purpose LLMs: These models, such as GPT-4 and Gemini, are trained on broad datasets covering virtually every topic. They handle a wide range of tasks from content generation to Q&A, making them the default choice for most marketing and content applications.

Domain-specific LLMs: These models are fine-tuned on industry-specific data, such as legal texts, medical literature, or financial reports. As a result, they produce more accurate outputs for specialized fields where generic models may lack the depth or precision required for professional use cases.
Open-weight LLMs: Models such as Meta’s Llama and Mistral have publicly released weights, allowing developers to inspect, modify, and deploy them. This transparency accelerates innovation and gives organizations greater control over how the model is configured for their specific needs.

Instruction-tuned LLMs: These models are specifically trained to follow natural language instructions from users. They power most consumer-facing AI tools, including writing assistants and chatbots, because they reliably align their outputs with what users are actually asking for.

Multimodal LLMs: The latest generation of models can process and generate text, images, audio, and other data types within a single system. These models are expanding AI capabilities in content production, creative campaigns, and multi-format digital marketing workflows.

How Do Large Language Models (LLMs) Actually Work?

Large Language Models are built on a neural network architecture known as the transformer. This architecture processes text by breaking it into smaller units called tokens, which may be words, word fragments, or characters. The model then analyzes the relationships among all tokens simultaneously, rather than reading them one at a time.

At the core of the transformer is a mechanism called self-attention. This allows the model to weigh the importance of different words relative to one another, regardless of how far apart they appear in a sentence. The result is that an LLM can understand context and produce coherent, nuanced responses instead of generic or disconnected outputs.

When a user submits a prompt, the model encodes the input and processes it through multiple neural network layers. It then generates a response by predicting the most likely next token based on all prior context. This process, called inference, takes milliseconds per token and repeats until the full response is complete. The model draws on everything it absorbed during its pre-training phase.
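
To make the self-attention idea above more concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: the three tokens and their two-dimensional vectors are made up, and the learned projection matrices that real transformers apply to produce separate queries, keys, and values are omitted, so each token vector plays all three roles.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs.

    Real transformers first apply learned projections; they are omitted here.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this token against every token, including itself.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # New representation: weighted mix of all token vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


tokens = ["search", "engines", "answer"]
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]  # made-up 2-d vectors

outputs, weights = self_attention(embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence. Because the vectors for "search" and "engines" point in similar directions, those two tokens give each other more weight than either gives to "answer", regardless of their positions in the sequence.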
What Are the Benefits and Challenges of Large Language Models (LLMs)?

LLMs offer powerful advantages for content and digital marketing teams. However, adopting them effectively requires navigating a set of practical challenges.

Benefits of LLMs

LLMs greatly accelerate content production, allowing marketing teams to generate drafts, summaries, and research at a pace that would be impossible through manual effort alone.

These models make it possible to personalize messaging at scale. Brands can tailor their communication for different audience segments without increasing the manual workload for writers and strategists.

LLMs power the AI search platforms that increasingly determine how brands are discovered. Therefore, understanding these models is a core part of any serious content strategy.

Organizations that integrate LLMs into their workflows consistently report improvements